dream-textures - Stable Diffusion built into the Blender shader editor

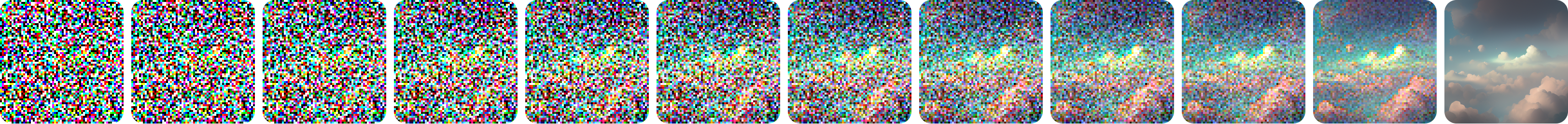

- Create textures, concept art, background assets, and more with a simple text prompt

- Use the 'Seamless' option to create textures that tile perfectly with no visible seam

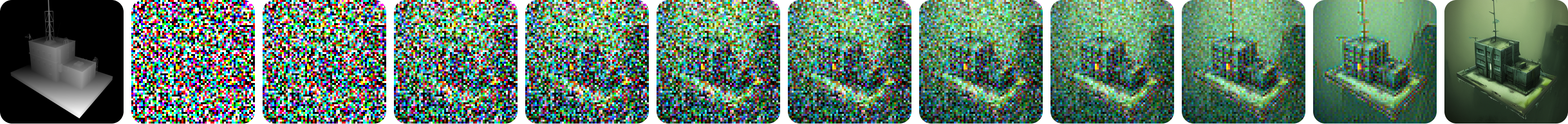

- Texture entire scenes with 'Project Dream Texture' and depth to image

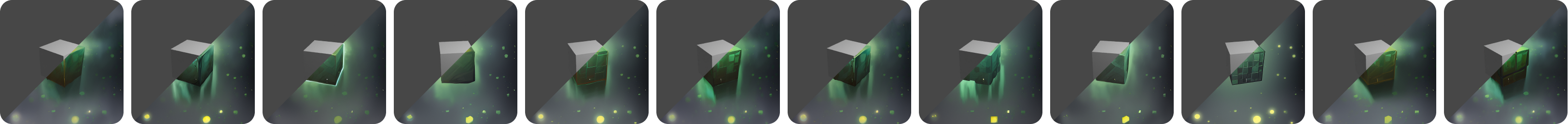

- Re-style animations with the Cycles render pass

- Run the models on your machine to iterate without slowdowns from a service

Installation

Download the latest release and follow the instructions there to get up and running.

On macOS, you may run into a quarantine issue with the dependencies. To work around this, run the following command in the Terminal app:

`xattr -r -d com.apple.quarantine ~/Library/Application\ Support/Blender/3.3/scripts/addons/dream_textures/.python_dependencies`

This will allow the PyTorch `.dylib`s and `.so`s to load without having to manually allow each one in System Preferences.
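The path in that command is hardcoded to Blender 3.3. As a minimal sketch, the Python below builds the same command for another Blender version. The function names `quarantine_command` and `remove_quarantine` are hypothetical helpers, not part of the add-on, and the sketch assumes the add-on lives in the default macOS location used above.

```python
import subprocess
from pathlib import Path


def quarantine_command(blender_version: str) -> list[str]:
    """Build the xattr command from this README for a given Blender version."""
    # Assumption: the add-on is installed in the default per-user macOS location.
    deps = (
        Path.home()
        / "Library" / "Application Support" / "Blender" / blender_version
        / "scripts" / "addons" / "dream_textures" / ".python_dependencies"
    )
    return ["xattr", "-r", "-d", "com.apple.quarantine", str(deps)]


def remove_quarantine(blender_version: str) -> None:
    """Run the command; only meaningful on macOS, where xattr is available."""
    subprocess.run(quarantine_command(blender_version), check=True)
```

For example, `remove_quarantine("3.4")` would clear the quarantine attribute for an installation under Blender 3.4, provided that folder exists.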

If you want a visual guide to installation, see this video tutorial from Ashlee Martino-Tarr: https://youtu.be/kEcr8cNmqZk

Ensure you always install the latest version of the add-on if any guides become out of date.

Usage

Here are a few quick guides:

Setting Up

Setup instructions for various platforms and configurations.

Image Generation

Create textures, concept art, and more with text prompts. Learn how to use the various configuration options to get exactly what you're looking for.

Texture Projection

Texture entire models and scenes with depth to image.

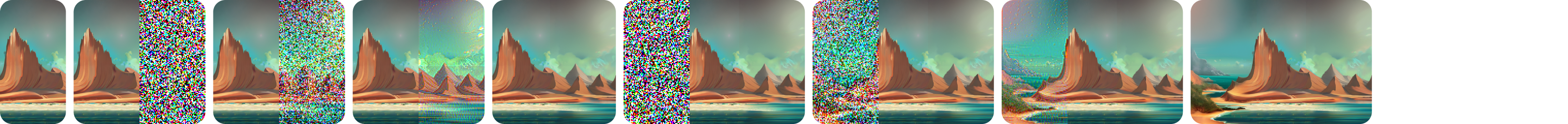

Inpaint/Outpaint

Inpaint to fix up images and convert existing textures into seamless ones automatically.

Outpaint to increase the size of an image by extending it in any direction.

Render Pass

Perform style transfer and create novel animations with Stable Diffusion as a post-processing step.

AI Upscaling

Upscale your low-res generations 4x.

History

Recall, export, and import history entries for later use.

Compatibility

Dream Textures has been tested with CUDA and Apple Silicon GPUs. Over 4GB of VRAM is recommended.
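To see which of these backends PyTorch can use on your machine, a quick check along the following lines may help. This is a sketch, not the add-on's own detection logic; `detect_backend` and `cuda_vram_gb` are hypothetical names, and the code should be run in an interpreter where PyTorch is installed (for example Blender's Python console after the dependencies are set up).

```python
from typing import Optional


def detect_backend() -> str:
    """Return "cuda", "mps", or "unsupported" based on what PyTorch reports."""
    try:
        import torch
    except ImportError:
        return "unsupported"  # PyTorch is not installed in this interpreter
    if torch.cuda.is_available():
        return "cuda"
    # torch.backends.mps is only present in newer PyTorch releases.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"
    return "unsupported"


def cuda_vram_gb() -> Optional[float]:
    """Return total memory of the first CUDA device in GiB, or None if no CUDA."""
    if detect_backend() != "cuda":
        return None
    import torch
    return torch.cuda.get_device_properties(0).total_memory / 1024**3


print(detect_backend(), cuda_vram_gb())
```

Apple Silicon GPUs share memory with the system, so the VRAM check here only applies to CUDA devices.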

If you have an issue with a supported GPU, please create an issue.

Cloud Processing

If your hardware is unsupported, you can use DreamStudio to process in the cloud. Follow the instructions in the release notes to set up DreamStudio.

Contributing

After cloning the repository, there are a few more steps you need to complete to set up your development environment:

- Install submodules: `git submodule update --init --recursive`

- I recommend the Blender Development extension for VS Code for debugging. If you just want to install manually though, you can put the `dream_textures` repo folder in Blender's addon directory.

- After running the local add-on in Blender, set up the model weights like normal.

- Install dependencies locally

    - Open Blender's preferences window

    - Enable Interface > Display > Developer Extras

    - Then install dependencies for development under Add-ons > Dream Textures > Development Tools

    - This will download all pip dependencies for the selected platform into `.python_dependencies`
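For the manual install route mentioned above, the sketch below locates Blender's default per-user add-on directory and links a cloned repo into it under the name `dream_textures`. Both function names are hypothetical. The sketch swaps in a symlink in place of copying the folder, which is an assumption about your workflow rather than something these instructions require, and it only covers Blender's default directories.

```python
import os
import sys
from pathlib import Path


def blender_addons_dir(blender_version: str, platform: str = sys.platform) -> Path:
    """Return Blender's default per-user add-on directory for a platform."""
    if platform == "darwin":
        base = Path.home() / "Library" / "Application Support" / "Blender"
    elif platform.startswith("win"):
        appdata = os.environ.get("APPDATA", str(Path.home() / "AppData" / "Roaming"))
        base = Path(appdata) / "Blender Foundation" / "Blender"
    else:
        # Assumption: Linux with the default config location.
        base = Path.home() / ".config" / "blender"
    return base / blender_version / "scripts" / "addons"


def link_repo(repo: Path, blender_version: str) -> Path:
    """Symlink a cloned repo into the add-on directory as `dream_textures`."""
    target = blender_addons_dir(blender_version) / "dream_textures"
    target.parent.mkdir(parents=True, exist_ok=True)
    if not target.exists():
        target.symlink_to(repo.resolve(), target_is_directory=True)
    return target
```

Calling `link_repo(Path("path/to/clone"), "3.3")` would make edits in the clone visible to Blender without copying files; on Windows, creating symlinks may need elevated permissions.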

Tips

- On Apple Silicon, with the `requirements-dream-studio.txt` you may run into an error with gRPC using an incompatible binary. If so, please use the following command to install the correct gRPC version:

  `pip install --no-binary :all: grpcio --ignore-installed --target .python_dependencies --upgrade`
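To confirm whether the gRPC build in `.python_dependencies` actually loads, a small check like the one below can be run before and after reinstalling. It is only a sketch: `grpc_loads` is a hypothetical helper, and the result is meaningful only when run with the same Python interpreter and architecture that Blender uses.

```python
import importlib
import sys
from pathlib import Path


def grpc_loads(deps_dir: Path) -> bool:
    """Try importing grpc with deps_dir first on sys.path and report success."""
    sys.path.insert(0, str(deps_dir))
    try:
        importlib.import_module("grpc")
        return True
    except ImportError as error:
        # A binary built for the wrong architecture fails to load as an ImportError.
        print(f"gRPC failed to load: {error}")
        return False
    finally:
        sys.path.remove(str(deps_dir))


print(grpc_loads(Path(".python_dependencies")))
```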